Seedance 2.0 — The Ultimate AI Video Generator Guide (2026)

Complete guide to Seedance 2.0 by ByteDance — features, prompts, pricing, and how to use it on ZenCreator.pro for unrestricted AI video generation.

Seedance AI is the breakout AI video generator of early 2026. Developed by the Seed research team at ByteDance, the company behind TikTok, Seedance 2.0 launched on February 10, 2026, and immediately became the most talked-about video generation model in the industry. Google Trends shows an 850% spike in searches for "seedance ai" within the first week alone.

What makes it special? Seedance 2.0 generates up to 2K resolution video with native synchronized audio — dialogue, sound effects, ambient noise, and music — all produced in a single generation pass. No stitching, no post-processing. It accepts text, images, video, and audio as inputs simultaneously, making it the first truly multimodal AI video generator available to the public.

TL;DR: Seedance 2.0 is ByteDance's best video AI model yet — 2K resolution, native audio, multi-shot storytelling, and up to 12 reference files per generation. It's accessible through Dreamina (Jimeng AI) and several third-party platforms. Seedance 2.0 is coming soon to ZenCreator — where you'll be able to use it alongside WAN 2.2, custom LoRA models, and unrestricted generation.

What Is Seedance 2.0?

Seedance 2.0 is the third major iteration of ByteDance's video AI model line. Where Seedance 1.0 produced silent 5-second clips and Seedance 1.5 Pro introduced basic audio generation in December 2025, version 2.0 represents a generational leap.

The model uses a Dual-Branch Diffusion Transformer architecture that generates video and audio simultaneously. This means lip-synced dialogue, matched sound effects, and ambient audio are baked into the output — not layered on after the fact. The result is noticeably more coherent than competitors that handle audio as a separate step.

Seedance 2.0 is available through ByteDance's Dreamina platform (known in China as Jimeng AI) and through several third-party integrations. API access has been announced for Q3 2026.

Key Features of Seedance 2.0

Text-to-Video Generation

Write a prompt, get a video. Seedance 2.0 handles complex scene descriptions with multiple characters, camera movements, lighting changes, and emotional performances. Videos can be generated at up to 2K resolution in 16:9, 4:3, 1:1, 3:4, or 9:16 aspect ratios, with durations from 4 to 15 seconds.

Generation times vary from roughly 60 seconds for a standard clip to about 10 minutes for a 15-second video with multiple reference files.

Image-to-Video (Animation)

Upload a still image and Seedance 2.0 will animate it. This works with photos, illustrations, AI-generated images, and even manga panels. The model preserves the visual style and character details of the source image while adding natural motion, camera movement, and audio.

The @ Reference System

This is the headline feature. Seedance 2.0 lets you upload up to 12 reference files — up to 9 images, 3 videos (max 15s each), and 3 audio files (MP3, max 15s each). Each file gets an automatic label like @Image1, @Video1, or @Audio1, and you reference them directly in your prompt.

This unlocks director-level control:

- Character consistency: "@Image1 for the main character's face, @Image2 for the villain's appearance"

- Camera motion transfer: "Follow the camera movement from @Video1"

- Scene/environment: "@Image3 as the background setting"

- Motion choreography: "Copy the dance from @Video2 onto @Image1"

- Audio sync: "@Audio1 as background music, sync movement to the beat"

- Style transfer: "Apply the visual style of @Video1 to @Image1"
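The following minimal Python sketch makes the limits concrete. It checks a set of uploads against the caps listed above: 12 files total, at most 9 images, 3 videos, and 3 MP3 audio files, with each clip 15 seconds or shorter. It then assigns @Image1-style labels. Three things here are illustrative assumptions and do not come from Dreamina or ByteDance, which have not published an API:

- the `Reference` class and the function names;
- numbering labels per file type in upload order;
- treating an @-label with no matching upload as an error.

```python
import re
from dataclasses import dataclass

# Limits as stated in this guide; not read from any official API.
MAX_TOTAL = 12
MAX_PER_KIND = {"image": 9, "video": 3, "audio": 3}
MAX_CLIP_SECONDS = 15.0  # applies to video and audio references

LABEL_PATTERN = re.compile(r"@(?:Image|Video|Audio)\d+")


@dataclass
class Reference:
    """Hypothetical description of one uploaded reference file."""
    kind: str             # "image", "video", or "audio"
    filename: str
    seconds: float = 0.0  # clip length; ignored for images


def label_references(refs: list[Reference]) -> dict[str, Reference]:
    """Validate uploads and assign @Image1 / @Video1 / @Audio1 style labels.

    Labels are numbered per file type in upload order (an assumption).
    """
    if len(refs) > MAX_TOTAL:
        raise ValueError(f"too many reference files: {len(refs)} > {MAX_TOTAL}")
    counts = {kind: 0 for kind in MAX_PER_KIND}
    labels: dict[str, Reference] = {}
    for ref in refs:
        if ref.kind not in MAX_PER_KIND:
            raise ValueError(f"unsupported reference type: {ref.kind!r}")
        counts[ref.kind] += 1
        if counts[ref.kind] > MAX_PER_KIND[ref.kind]:
            raise ValueError(f"more than {MAX_PER_KIND[ref.kind]} {ref.kind} files")
        if ref.kind != "image" and ref.seconds > MAX_CLIP_SECONDS:
            raise ValueError(f"{ref.filename} is longer than {MAX_CLIP_SECONDS:g}s")
        if ref.kind == "audio" and not ref.filename.lower().endswith(".mp3"):
            raise ValueError(f"{ref.filename}: audio references must be MP3")
        labels[f"@{ref.kind.capitalize()}{counts[ref.kind]}"] = ref
    return labels


def unknown_labels(prompt: str, labels: dict[str, Reference]) -> list[str]:
    """Return @-labels used in the prompt that no uploaded file provides."""
    return [tag for tag in LABEL_PATTERN.findall(prompt) if tag not in labels]


refs = [
    Reference("image", "hero.png"),
    Reference("video", "dance.mp4", seconds=12.0),
    Reference("audio", "beat.mp3", seconds=10.0),
]
labels = label_references(refs)
print(list(labels))  # ['@Image1', '@Video1', '@Audio1']
print(unknown_labels("Copy the dance from @Video2 onto @Image1", labels))  # ['@Video2']
```

Running a check like `unknown_labels` before submitting catches a typo such as @Video2 when only one video was uploaded.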

Multi-Shot Storytelling

Previous AI video models excelled at single clips but failed at sequences. Seedance 2.0 can generate multi-shot narratives where characters remain visually consistent, camera angles shift naturally, and story beats flow logically. Using the keyword "lens switch" in your prompt signals a cut, creating different shots within one generation while maintaining continuity.
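The sketch below roughly illustrates how a multi-shot prompt can be assembled: it joins shot descriptions with the "lens switch" cue described above. The function name is hypothetical. The "Lens switch to ..." phrasing is an assumption borrowed from this guide's own multi-shot example; the guide does not say whether wording beyond the keyword itself matters.

```python
def multi_shot_prompt(shots: list[str], audio: str = "") -> str:
    """Join shot descriptions into one prompt, inserting the "lens switch"
    cut cue before every shot after the first. Later shots should be
    phrased to follow "Lens switch to", e.g. "wide shot of ...".
    """
    cleaned = [s.strip().rstrip(".") for s in shots if s.strip()]
    if not cleaned:
        raise ValueError("need at least one non-empty shot description")
    sentences = [cleaned[0] + "."]
    for shot in cleaned[1:]:
        sentences.append(f"Lens switch to {shot}.")
    if audio.strip():
        # Audio cues describe the whole generation, so they go last.
        sentences.append(audio.strip().rstrip(".") + ".")
    return " ".join(sentences)


print(multi_shot_prompt(
    [
        "A detective in a trench coat approaches an abandoned warehouse",
        "wide shot of the dark interior with a single light hanging from the ceiling",
        "over-shoulder shot as he sees a mysterious figure in the shadows",
    ],
    audio="Tense orchestral music builds throughout",
))
```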

Native Audio Generation

The Dual-Branch architecture produces audio natively alongside video. This includes:

- Dialogue with lip-sync in 8+ languages (English, Chinese, Japanese, Korean, Spanish, French, German, Portuguese)

- Sound effects matched to on-screen action

- Ambient audio appropriate to the scene

- Music that matches the mood and pacing

Technical Specifications

| Spec | Value |
|---|---|
| Max Resolution | 2K |
| Duration | 4–15 seconds |
| Frame Rate | 24 fps |
| Native Audio | Yes (dialogue, SFX, ambient, music) |
| Image Inputs | Up to 9 |
| Video Inputs | Up to 3 (15s max each) |
| Audio Inputs | Up to 3 (MP3, 15s max each) |
| Total Reference Files | Up to 12 |
| Aspect Ratios | 16:9, 4:3, 1:1, 3:4, 9:16 |
| Watermark | None |
| Generation Time | ~60s standard, ~10 min complex |

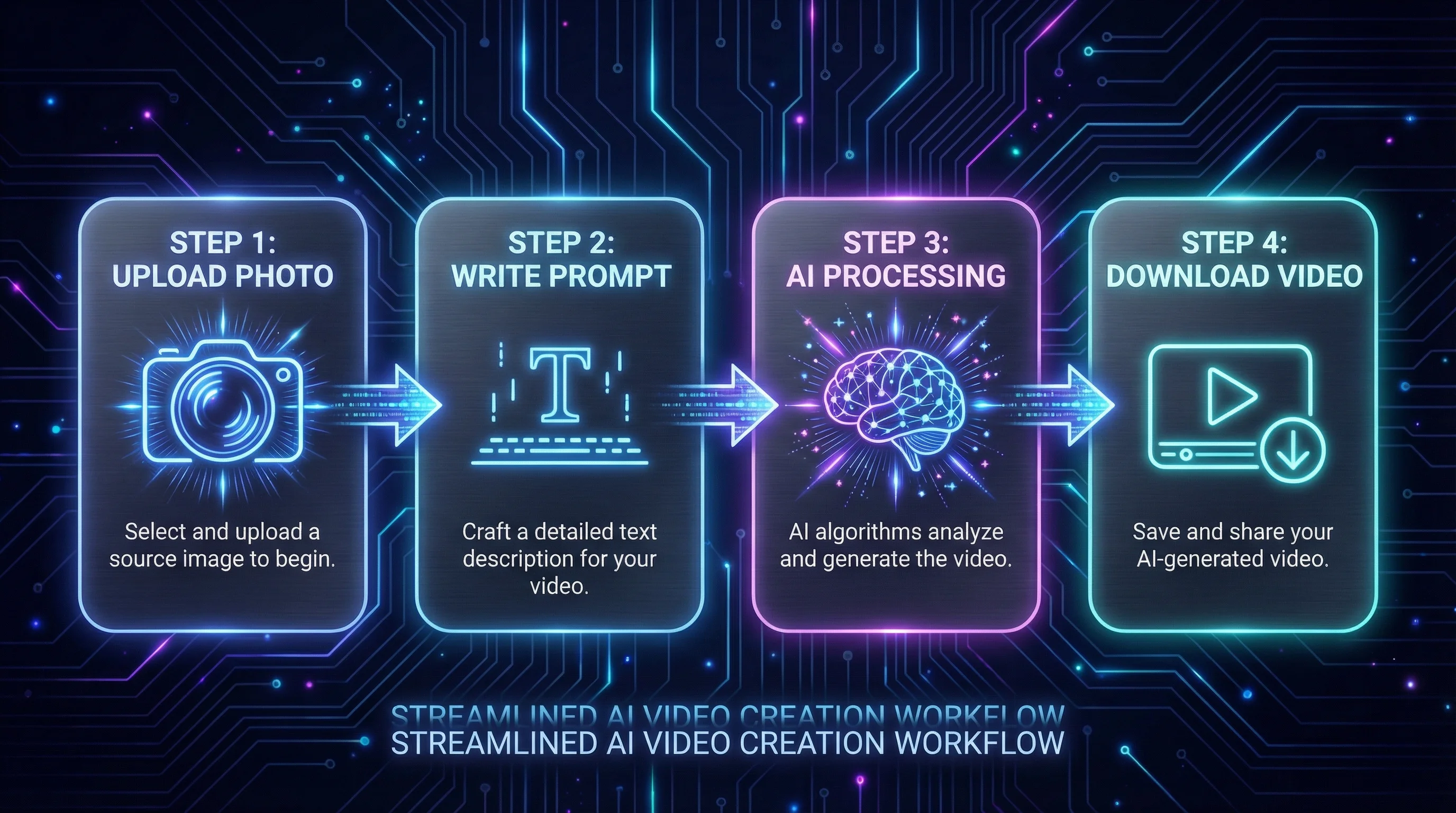

How to Use Seedance 2.0

Step 1: Access the Platform

Seedance 2.0 is available through several platforms:

- Dreamina (dreamina.ai) — ByteDance's official creative platform, known in China as Jimeng AI

- Third-party integrations — Platforms like EaseMate AI and others offer Seedance 2.0 through their own interfaces

- API — Coming Q3 2026 for developers

Create an account on your chosen platform. Most offer free credits to new users.

Step 2: Choose Your Input Mode

Decide how you want to generate:

- Text-to-Video: Write a text prompt describing your scene

- Image-to-Video: Upload one or more images to animate

- Video-to-Video: Upload existing video for style transfer or modification

- Multi-Reference: Combine images, videos, and audio using the @ reference system

Step 3: Configure Settings

Set your preferred:

- Aspect ratio — Match your target platform (9:16 for TikTok/Reels, 16:9 for YouTube, 1:1 for Instagram)

- Duration — 4 to 15 seconds

- Resolution — Up to 2K
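Before generating, it can help to check your settings against the limits quoted in this guide. The sketch below is a hypothetical local helper, not a platform API. It takes an aspect ratio either from a platform preset (following the advice above) or as an explicit value, and it rejects durations outside 4 to 15 seconds. Two assumptions apply:

- Durations are whole seconds.
- Resolution is left out, because the guide documents only the 2K ceiling and not the lower tiers.

```python
from dataclasses import dataclass
from typing import Optional

# Values taken from this guide's specification table.
SUPPORTED_RATIOS = ("16:9", "4:3", "1:1", "3:4", "9:16")
MIN_SECONDS, MAX_SECONDS = 4, 15

# Presets following the aspect-ratio advice in Step 3.
PLATFORM_PRESETS = {
    "tiktok": "9:16",
    "reels": "9:16",
    "youtube": "16:9",
    "instagram": "1:1",
}


@dataclass(frozen=True)
class GenerationSettings:
    aspect_ratio: str
    duration_s: int


def make_settings(duration_s: int, platform: Optional[str] = None,
                  aspect_ratio: Optional[str] = None) -> GenerationSettings:
    """Build settings from an explicit ratio or a platform preset, rejecting
    values outside the ranges listed in this guide."""
    # An explicit ratio wins; otherwise fall back to the platform preset.
    ratio = aspect_ratio or PLATFORM_PRESETS.get((platform or "").lower())
    if ratio not in SUPPORTED_RATIOS:
        raise ValueError(f"unsupported or missing aspect ratio: {ratio!r}")
    if not MIN_SECONDS <= duration_s <= MAX_SECONDS:
        raise ValueError(
            f"duration must be {MIN_SECONDS}-{MAX_SECONDS} s, got {duration_s}")
    return GenerationSettings(ratio, duration_s)


print(make_settings(10, platform="TikTok"))
# GenerationSettings(aspect_ratio='9:16', duration_s=10)
```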

Step 4: Write Your Prompt and Generate

Write a detailed prompt (see the examples below), click Generate, and wait. Preview the result, then download it watermark-free.

Seedance Prompts: Best Practices and Examples

Getting great results from Seedance 2.0 requires structured prompting. Here are the key principles and real examples.

Prompt Structure

The most effective Seedance prompts follow this pattern:

- Subject — Who or what is in the scene

- Action — What's happening

- Setting/Environment — Where it takes place

- Camera — Angle, movement, lens type

- Lighting/Mood — Atmosphere and color palette

- Audio cues — Dialogue, sounds, music style
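One way to apply this structure consistently is to fill in each component separately and let a small helper join them in order. The following Python sketch does that. The class and field names are hypothetical. Merging subject, action, and setting into one opening sentence is a stylistic assumption modeled on the example prompts in this guide, not a Seedance requirement.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptParts:
    """The six components of the prompt structure (field names are illustrative)."""
    subject: str
    action: str
    setting: str
    camera: str = ""
    lighting: str = ""
    audio: str = ""

    def missing(self) -> list[str]:
        """Names of components left empty, useful as a checklist."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Subject, action, and setting form the opening sentence; camera,
        lighting, and audio each follow as their own sentence."""
        def sentence(text: str) -> str:
            text = text.strip().rstrip(".")
            return f"{text}." if text else ""

        scene = " ".join(
            p.strip().rstrip(".")
            for p in (self.subject, self.action, self.setting) if p.strip()
        )
        parts = [sentence(scene), sentence(self.camera),
                 sentence(self.lighting), sentence(self.audio)]
        return " ".join(p for p in parts if p)


prompt = PromptParts(
    subject="A pair of matte black wireless headphones",
    action="sits",
    setting="on a dark marble surface",
    camera="The camera slowly orbits 180 degrees around the product",
    lighting="Soft studio lighting with a single key light from the left creates dramatic shadows",
    audio="Minimal ambient electronic music",
)
print(prompt.render())
```

The `missing()` check doubles as a reminder of the tip about describing audio. An empty `audio` field shows up in the list before you generate.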

Example Prompts for Different Use Cases

Cinematic Portrait:

A woman with dark curly hair wearing a red silk dress stands on a rooftop at golden hour. She turns slowly toward the camera with a slight smile. Shallow depth of field, 85mm lens. Warm amber light catches her hair. Ambient city sounds below, gentle wind.

Action Sequence:

A parkour runner in a black hoodie sprints across a rain-soaked rooftop at night. He leaps across a gap between buildings, lands in a roll, and keeps running. Handheld camera follows from behind, then lens switch to a low-angle shot as he jumps. Neon reflections on wet surfaces. Sound of footsteps on concrete, rain, and heavy breathing.

Product Showcase:

A pair of matte black wireless headphones sits on a dark marble surface. The camera slowly orbits 180 degrees around the product. Soft studio lighting with a single key light from the left creates dramatic shadows. Minimal ambient electronic music.

Character Animation (with reference):

@Image1 walks through a snowy forest at twilight. She reaches out to catch a snowflake, then looks up at the sky with wonder. Slow tracking shot from the side. Blue-purple ambient light with warm breath visible in cold air. Crunching snow footsteps, distant wind through pine trees.

Multi-Shot Narrative:

A detective in a trench coat approaches an abandoned warehouse. Close-up of his hand pushing the door open. Lens switch to wide shot of the dark interior with a single light hanging from the ceiling. Lens switch to over-shoulder shot as he sees a mysterious figure in the shadows. Tense orchestral music builds throughout, creaking door sound, echoing footsteps.

Prompt Tips

- Be specific about motion — "turns slowly to the left" beats "moves"

- Include camera language — "tracking shot," "dolly zoom," "handheld" all work

- Use "lens switch" for multi-shot sequences within one generation

- Reference audio explicitly — The model generates better audio when you describe it

- Specify duration context — For 15-second generations, include enough action to fill the time

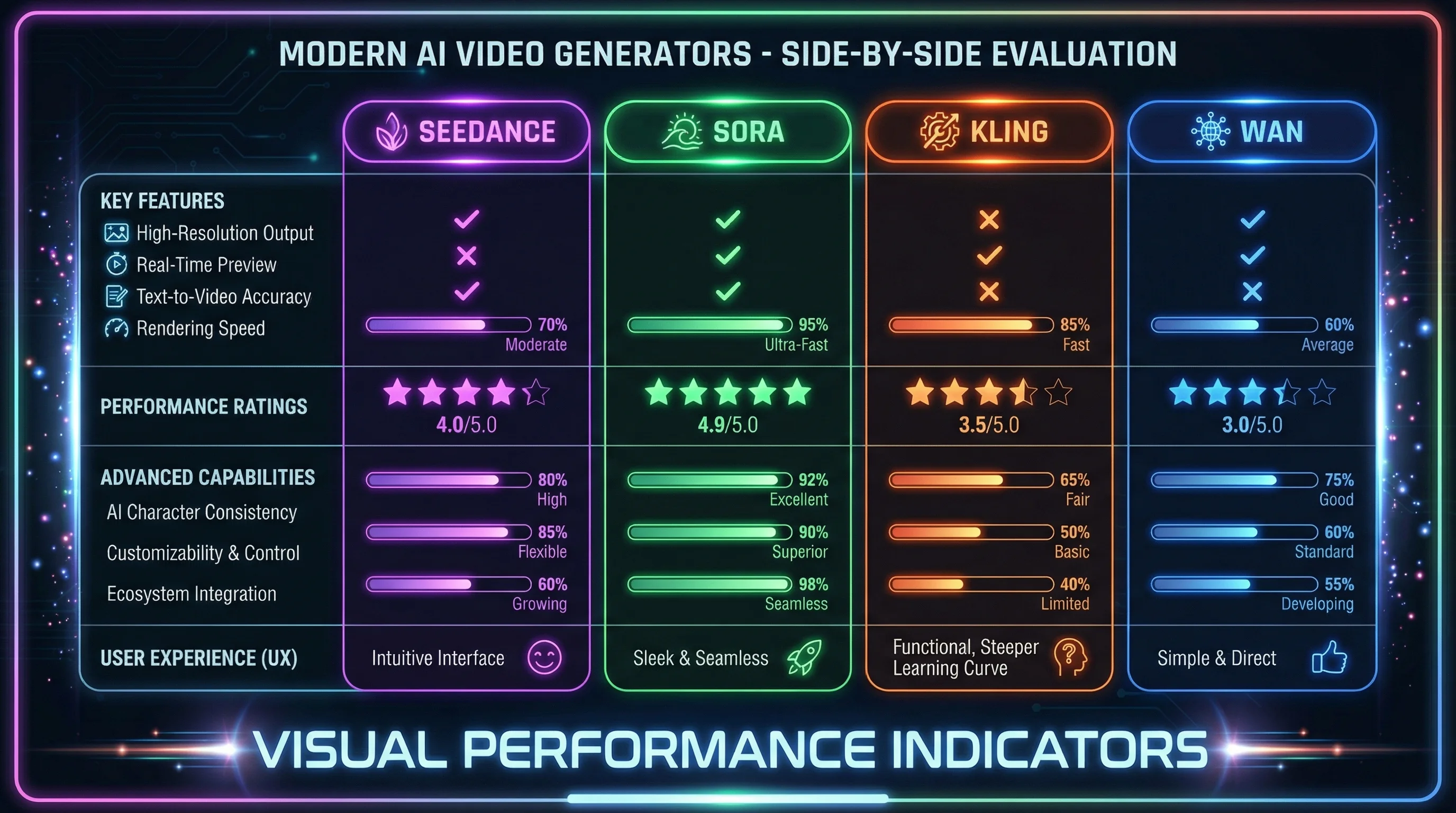

Seedance 2.0 vs Other AI Video Generators

How does Seedance 2.0 compare to the other major players in AI video generation?

| Feature | Seedance 2.0 | Sora (OpenAI) | Kling (Kuaishou) | ZenCreator (WAN 2.2) |
|---|---|---|---|---|
| Max Resolution | 2K | 1080p | 1080p | 1080p |
| Native Audio | ✅ Full | ✅ Limited | ❌ | ❌ (separate audio) |
| Max Duration | 15s | 20s | 10s | 10s |
| Reference Files | Up to 12 | Limited | Up to 4 | Unlimited images |
| Multi-Shot | ✅ | ✅ | ❌ | ❌ |
| Content Restrictions | Moderate | Strict | Moderate | Unrestricted |
| Custom LoRA Support | ❌ | ❌ | ❌ | ✅ |
| Lip-Sync Languages | 8+ | English | Chinese/English | N/A |
| Free Tier | Yes (limited) | Yes (limited) | Yes (limited) | Yes |
| API Available | Q3 2026 | Yes | Yes | Yes |

Seedance 2.0 leads in raw capability — its multimodal reference system and native audio are best-in-class. Sora offers longer clips but with stricter content policies. Kling is competitive but less feature-rich. ZenCreator stands out by offering unrestricted generation with WAN 2.2 + custom LoRA support today, with Seedance 2.0 integration coming soon — making it the only platform combining multiple top-tier models with full creative freedom.

Limitations and Restrictions

Seedance 2.0 is impressive, but it has clear limitations:

Content Moderation

While Seedance 2.0 is more permissive than Western alternatives like Sora, it still applies content filters. ByteDance enforces moderation policies aligned with Chinese and international regulations. Certain types of content — particularly explicit material, violence, and politically sensitive subjects — may be blocked or filtered.

For creators working in fashion, swimwear, artistic nudity, or other boundary-pushing categories, these restrictions can be a significant obstacle.

Duration Limits

At 4–15 seconds per generation, you're still limited to short clips. Creating longer content requires generating multiple clips and editing them together manually. The multi-shot feature helps with narrative continuity, but you can't generate a 60-second video in one pass.
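The stitching arithmetic is simple: a target length needs at least ⌈target / 15⌉ generations. The sketch below is a hypothetical planning helper and is not part of any Seedance tool. It splits a target length into that many clips of near-equal length, each inside the 4 to 15 second window. It assumes whole-second durations and ignores any trimming you do during editing.

```python
import math

MIN_CLIP_S, MAX_CLIP_S = 4, 15  # per-generation limits quoted in this guide


def plan_clips(total_s: int) -> list[int]:
    """Split a target length into the fewest clips that each fit the
    4-15 s window, spreading the seconds as evenly as possible."""
    if total_s < MIN_CLIP_S:
        raise ValueError(f"target must be at least {MIN_CLIP_S} s")
    count = math.ceil(total_s / MAX_CLIP_S)  # fewest clips that can cover it
    base, extra = divmod(total_s, count)     # even share plus remainder
    # The first `extra` clips get one extra second so the total is exact.
    return [base + 1] * extra + [base] * (count - extra)


print(plan_clips(60))  # [15, 15, 15, 15]
print(plan_clips(62))  # [13, 13, 12, 12, 12]
```

Spreading time evenly avoids ending on a very short leftover clip, such as 15 + 15 + 15 + 15 + 2 for a 62-second target. That split would also fall below the 4-second minimum.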

Platform Dependency

Seedance 2.0 is currently locked to ByteDance's ecosystem and approved third-party platforms. There's no self-hosted option, no open-source weights, and the API isn't available yet. Your creative workflow depends entirely on ByteDance's infrastructure and policies.

Inconsistent Availability

Access to Seedance 2.0 varies by region. Some users report geofencing issues, queue times during peak hours, and occasional model downgrades when servers are under heavy load.

No Custom Model Training

You cannot fine-tune Seedance 2.0 on your own data. There's no LoRA support, no DreamBooth integration, and no way to train the model on specific characters, styles, or brand aesthetics. You get what ByteDance offers — nothing more.

Seedance 2.0 on ZenCreator (Coming Soon)

Seedance 2.0 will soon be available on ZenCreator.pro — giving you access to ByteDance's best video model alongside ZenCreator's existing tools and unrestricted generation capabilities.

Why Use Seedance on ZenCreator?

All models in one place. ZenCreator brings together the best AI video models — Seedance 2.0, WAN 2.2, and more — so you can pick the right tool for each project without switching platforms.

No content restrictions. Unlike Dreamina and other platforms, ZenCreator doesn't apply arbitrary content filters. Full creative freedom for fashion, art, experimental, and professional content.

WAN 2.2 + LoRA already available. While Seedance is coming soon, ZenCreator already offers powerful video generation via WAN 2.2 with custom LoRA support — something Seedance doesn't offer natively.

Custom LoRA support. ZenCreator's key differentiator. Apply custom LoRA models to your video generations, enabling:

- Consistent characters trained on specific faces or body types

- Specialized styles — anime, photorealistic, oil painting, cyberpunk, and more

- Brand-specific aesthetics that match your creative identity

- Subject-specific models trained on products, locations, or themes

No other major AI video platform offers LoRA-based customization for video generation.

Accessible pricing. ZenCreator offers a free tier and transparent pricing without the queue times or regional restrictions that affect Seedance 2.0 on other platforms.

Try ZenCreator's AI Video Generator → — WAN 2.2 + LoRA available now, Seedance 2.0 coming soon.

Frequently Asked Questions

What is Seedance AI?

Seedance AI is a family of AI video generation models developed by ByteDance, the parent company of TikTok. The latest version, Seedance 2.0, launched in February 2026 and generates 2K video with native audio from text, image, video, and audio inputs. It's considered one of the most capable AI video generators currently available.

Is Seedance 2.0 free to use?

Seedance 2.0 offers a free tier with limited credits on platforms like Dreamina. Most users receive enough free generations to test the model. For heavy usage, paid plans are available through the various platforms hosting the model. Pricing varies by platform.

How does Seedance compare to Sora?

Seedance 2.0 surpasses Sora in several areas: higher resolution (2K vs 1080p), more comprehensive audio generation, and a more flexible reference system (up to 12 files, versus Sora's more limited reference support). Sora offers a longer maximum duration (20s vs 15s) and has a publicly available API. Seedance also applies more relaxed content moderation than Sora.

Can I use Seedance 2.0 for commercial projects?

Yes, content generated through Seedance 2.0 is generally available for commercial use, though terms vary by platform. Check the specific terms of service for the platform you're using. Output is watermark-free.

What are the best Seedance prompts?

The best Seedance prompts include specific details about subject, action, camera movement, lighting, and audio. Structure your prompts with clear scene descriptions, use camera terminology like "tracking shot" or "dolly zoom," and include audio cues for dialogue and sound effects. Use "lens switch" to create multi-shot sequences. See the prompt examples section above for detailed templates.

Is there an unrestricted AI video generator?

ZenCreator is the leading unrestricted AI video generator. It uses WAN 2.2 for video generation and supports custom LoRA models for specialized styles and characters. Seedance 2.0 is also coming soon to ZenCreator, making it the first platform to offer both Seedance and unrestricted WAN 2.2 + LoRA generation in one place.

Does Seedance 2.0 support custom models or LoRAs?

No. Seedance 2.0 does not support custom model training, LoRA fine-tuning, or any form of personalization beyond its reference file system. If you need custom-trained models for consistent characters or specialized styles, ZenCreator is the only major platform offering LoRA support for AI video generation.

What is ByteDance video AI?

ByteDance video AI refers to the suite of AI video tools developed by ByteDance's Seed research team. This includes the Seedance model family (1.0, 1.5 Pro, and 2.0) as well as internal tools used across TikTok's platform. Seedance 2.0 is the public-facing flagship product of ByteDance's video AI research.